Anthropic appears to have accidentally shipped a .map file with their Claude Code npm package, exposing the full readable source of the CLI tool. The package has since been pulled, but not before it was widely mirrored and dissected.

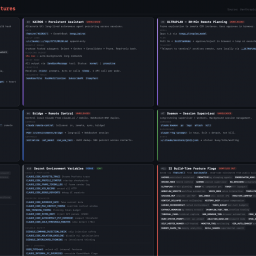

A few interesting findings from the leaked code:

- Anti-distillation mechanisms:

The client can request “fake tool” injection to poison training data for anyone scraping API traffic. There’s also a system that summarizes intermediate outputs with signed blobs, so recorded traffic doesn’t contain full reasoning chains. Both mechanisms are feature-flagged and relatively easy to bypass.

- “Undercover mode”:

A built-in mode prevents the model from revealing internal codenames or even mentioning “Claude Code.” Notably, it cannot be force-disabled in external contexts, meaning AI-generated contributions may intentionally appear human-authored.

- Frustration detection via regex:

Instead of using an LLM, user frustration is detected with a simple regex matching phrases like “wtf,” “this sucks,” etc. Cheap and fast, if a bit ironic.

- Client attestation at the transport layer:

Requests include a placeholder that gets replaced with a hash by Bun’s native HTTP stack (in Zig), allowing the server to verify requests come from an official binary. This acts like lightweight DRM for API access, though it’s gated behind flags and not airtight.

- Operational inefficiency:

A comment notes ~250K API calls/day were being wasted due to repeated failures in a compaction routine. Fixed by adding a simple failure cap.

- KAIROS (unreleased):

The code references a heavily gated autonomous agent mode with background workers, scheduled tasks, memory distillation, and GitHub integration, suggesting an always-on agent system in development.
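To make the regex-based frustration check concrete, here is a minimal Python sketch of the general idea. It is not the leaked code. Only “wtf” and “this sucks” come from the description above, and the function name `looks_frustrated` and the exact pattern are assumptions made for illustration.

```python
import re

# Illustrative pattern only: the two phrases are the ones quoted in the post;
# the real pattern in the leaked source is not reproduced here.
FRUSTRATION_RE = re.compile(r"\b(wtf|this sucks)\b", re.IGNORECASE)


def looks_frustrated(message: str) -> bool:
    """Return True if the message contains one of the listed phrases."""
    return FRUSTRATION_RE.search(message) is not None
```

A check like this runs locally in microseconds and costs no API call, which is presumably the appeal, at the price of missing any phrasing not on the list.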
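The “failure cap” fix for the compaction routine could look roughly like the sketch below. This is a guess at the general technique, not the actual patch. The class name `CompactionGuard`, the cap of 3, and the choice to reset the counter on success are all assumptions, since the post gives none of those details.

```python
from typing import Callable

MAX_CONSECUTIVE_FAILURES = 3  # hypothetical value; the post does not state the real cap


class CompactionGuard:
    """Stops retrying a compaction step after too many consecutive failures."""

    def __init__(self, compact: Callable[[], None],
                 max_failures: int = MAX_CONSECUTIVE_FAILURES) -> None:
        self._compact = compact
        self._max_failures = max_failures
        self._failures = 0

    def try_compact(self) -> bool:
        """Run compaction unless the cap is reached; return True on success."""
        if self._failures >= self._max_failures:
            return False  # cap reached: skip the call instead of failing again
        try:
            self._compact()
        except Exception:
            self._failures += 1
            return False
        self._failures = 0  # a success resets the consecutive-failure count
        return True
```

With a cap in place, a session stuck in a failing state makes at most `max_failures` compaction calls rather than one per attempt, which is how a small change could remove a large volume of wasted requests.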